Robots.txt Generator by Alaikas—Create SEO Robots File Fast

Search engine optimization is not only about keywords and backlinks. Technical SEO also plays a significant role in how a website performs, and the robots.txt file is one of its key elements. The robots.txt generator by Alaikas lets you create this file quickly and in the correct format, even without technical skills.

The robots.txt generator by Alaikas is a simple online tool that allows users to generate a robots.txt file to control how search engines crawl their websites. Whether you are a beginner or an experienced developer, this tool makes the process fast and easy.

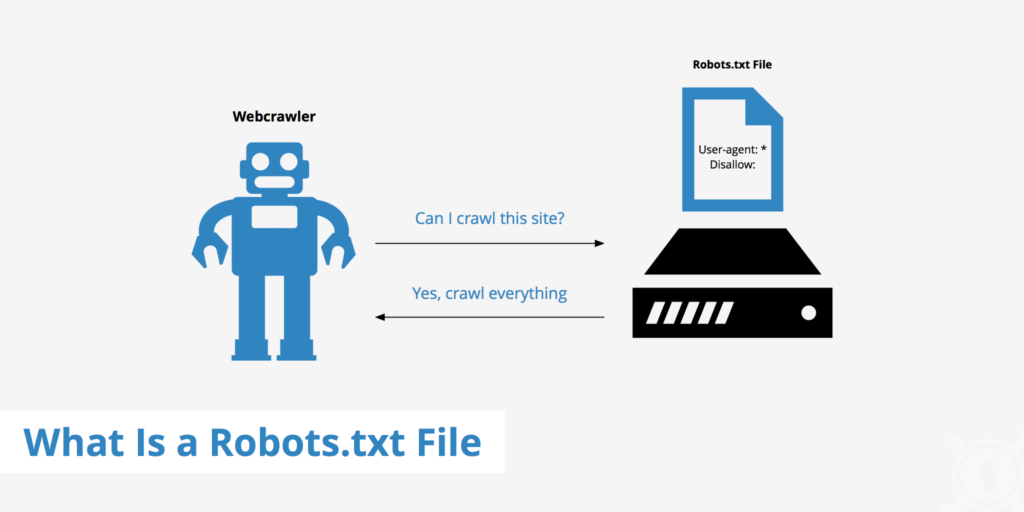

What is a robots.txt file?

A robots.txt file is a small text file placed in your website’s root directory. It tells search engine crawlers which pages they can access and which pages they should avoid.

For example, you may want to block:

- Admin pages

- Login pages

- Private folders

- Duplicate content

- Testing pages

Using the robots.txt generator by Alaikas, you can create these rules without writing code manually.
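
As a rough illustration of what a generated file might contain, the sketch below builds robots.txt text from a list of blocked paths. The helper name `build_robots_txt` and the paths (`/admin/`, `/login/`, `/private/`, `/test/`) are hypothetical examples and are not taken from the Alaikas tool's actual output.

```python
def build_robots_txt(disallowed_paths, user_agent="*", sitemap_url=None):
    """Return robots.txt text with one rule group for a single user agent."""
    lines = [f"User-agent: {user_agent}"]
    if disallowed_paths:
        # One Disallow line per blocked path prefix.
        lines.extend(f"Disallow: {path}" for path in disallowed_paths)
    else:
        # An empty Disallow value means nothing is blocked.
        lines.append("Disallow:")
    if sitemap_url:
        # The Sitemap line is optional and sits outside the rule group.
        lines.extend(["", f"Sitemap: {sitemap_url}"])
    return "\n".join(lines) + "\n"


# Hypothetical paths standing in for admin, login, private, and testing pages.
blocked = ["/admin/", "/login/", "/private/", "/test/"]
robots_txt = build_robots_txt(blocked)
print(robots_txt)
```

The `*` user agent applies the rules to every crawler that has no more specific group of its own.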

Why Use Robots.txt Generator by Alaikas?

The robots.txt generator by Alaikas is useful for improving website SEO and crawl efficiency. Here are some key reasons to use it:

1. Easy File Creation

No coding knowledge required. Just select options and generate your robots.txt file instantly.

2. Improve SEO Performance

Proper crawl management helps search engines focus on important pages.

3. Prevent Duplicate Content Issues

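Keep crawlers away from duplicate or unnecessary pages. Note that robots.txt controls crawling, not indexing: a blocked URL can still appear in search results if other pages link to it.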

4. Save Crawl Budget

Search engines crawl only a limited number of pages on a site in a given period. Robots.txt helps them spend that budget on your important content.

Features of Robots.txt Generator by Alaikas

The robots.txt generator by Alaikas offers helpful features such as:

- Simple and user-friendly interface

- Fast file generation

- No technical knowledge required

- SEO-friendly structure

- Free online tool

- Works for all websites

These features make it a valuable tool for website optimization.

How to Use Robots.txt Generator by Alaikas

Using the robots.txt generator by Alaikas is very simple:

- Visit the Alaikas robots.txt generator tool

- Select search engine bots

- Add pages or folders to block

- Generate the robots.txt file

- Download the file and upload it to your website’s root directory

Once the file is uploaded, compliant search engine crawlers will read it and follow its rules.
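
Before relying on a generated file, it can help to check how a crawler would interpret it. The following is a minimal sketch using Python's standard `urllib.robotparser` module; the rules, the `example.com` URLs, and the helper name `is_allowed` are assumptions made for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical file contents, similar to what a generator might produce.
robots_txt = """\
User-agent: *
Disallow: /admin/
Disallow: /login/
"""

parser = RobotFileParser()
# parse() accepts the file as a list of lines, so no network access is needed.
parser.parse(robots_txt.splitlines())


def is_allowed(url, user_agent="Googlebot"):
    """Return True if the parsed rules let this user agent fetch the URL."""
    return parser.can_fetch(user_agent, url)


print(is_allowed("https://example.com/admin/settings"))  # False
print(is_allowed("https://example.com/blog/post"))       # True
```

This only checks the rules themselves. Whether a given crawler honors them is up to that crawler.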

Who Should Use This Tool?

The robots.txt generator by Alaikas is ideal for:

- Website owners

- Bloggers

- SEO experts

- Web developers

- Digital marketers

- E-commerce store owners

Anyone managing a website can benefit from using this tool.

Benefits of Using Robots.txt Generator by Alaikas

✔ Improve crawl efficiency

✔ Better SEO performance

✔ Keep crawlers away from unwanted pages

✔ Easy to use

✔ Free tool

✔ Save time and effort

These advantages make the robots.txt generator by Alaikas essential for technical SEO.

FAQs

1. What is the robots.txt generator by Alaikas?

It is an online tool that helps create robots.txt files to control search engine crawling.

2. Is robots.txt important for SEO?

Yes, robots.txt helps search engines crawl important pages and avoid unnecessary ones.

3. Is the robots.txt generator by Alaikas free?

Yes, it is a free tool available for website owners and developers.

4. Where should I upload the robots.txt file?

Upload it to your website’s root directory so that it is reachable at domain.com/robots.txt.
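
Crawlers look for the file at a fixed location on each host, so its URL can be derived from any page address on the site. A small sketch, assuming a hypothetical helper named `robots_txt_url`:

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url):
    """Return the robots.txt URL for the host that serves page_url."""
    parts = urlsplit(page_url)
    # Keep the scheme and host, replace the path, and drop query and fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_txt_url("https://domain.com/blog/post?id=1"))
# https://domain.com/robots.txt
```

A file placed in a subfolder, such as domain.com/blog/robots.txt, would not be picked up.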

5. Can beginners use the robots.txt generator by Alaikas?

Yes, the tool is beginner-friendly and requires no technical knowledge.

6. Does robots.txt block pages from Google?

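Yes, robots.txt can prevent compliant search engines from crawling selected pages. It does not guarantee that a page stays out of search results, though: a blocked URL can still be indexed if other pages link to it. To keep a page out of the index, use a noindex directive or password protection instead.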

7. Can I edit robots.txt later?

Yes, you can update and regenerate the file anytime using the tool.
